ELIZA to ChatGPT: The Evolution and Impact of Voice-Driven AI Interfaces

Understanding how voice-driven interfaces take human-machine interaction to the next level of bandwidth, continuity and ubiquity

TL;DR

Voice input/output features in ChatGPT's latest release have significantly enhanced user interaction, suggesting a transformative potential for voice search given current bandwidth and computing capabilities.

The historical progression of conversational assistants, from ELIZA to Siri, Alexa, and others, has paved the way for voice interaction to become a mainstream method of engaging with technology.

Voice chat offers critical advantages, including omnipresent access to information (ubiquity), enriched auditory experiences via spatial audio, efficient information exchange outpacing traditional typing speeds, and a nuanced understanding of context and continuity in conversations.

In terms of practical application, voice-driven interfaces promise to revolutionize both consumer and enterprise sectors, with use cases ranging from on-demand specialized services to enhanced cross-device communication, mirroring natural human interactions with professionals.

The current landscape is ripe for voice-driven interfaces, given the substantial improvements in computing power, network bandwidth, and the quality of speech input/output devices.

Future developments in voice interaction are expected to focus on deep personalization, seamless integration across multiple devices, and the ability to interpret non-verbal cues, further enhancing the naturalness and empathy of human-machine communication.

Inspiration

I recently tried the voice input / output features in the latest ChatGPT release, focusing on holding a continuous conversation instead of typing text in the mobile app or on a desktop / laptop. It was an enthralling experience. After spinning up hundreds of conversations on the mobile interface using voice and images, I realized that this had increased and improved my interactions, while opening up neural pathways in my brain that were otherwise unexplored. I wanted to dissect and articulate how voice search could be transformational, given where we are with bandwidth and compute availability in the modern internet era.

Walking down the history lane

Since the internet's inception in 1983 and the rise of the World Wide Web with over 100,000 websites by 1995, text and images have dominated as the primary means of communication. ELIZA, an early text-based conversational program and a forerunner of today's voice assistants, was created by Joseph Weizenbaum at MIT in 1966, but internet-based voice assistants like Alexa, Siri, Cortana, and Google Assistant only gained prominence between 2014 and 2016. Initially used on laptops, these assistants transitioned to phones and smart home devices, commonly for setting reminders, sending texts, making calls, controlling smart devices, and performing basic web searches. Recent advancements in this field owe much to improved speech-to-text models, high-performance computing (GPUs & TPUs), and enhanced internet bandwidth.

Drawing an analogy

The analogy between indexed internet search and conversational AI-driven search, especially with the introduction of voice, can be summarized as follows. In pre-internet times, information was disseminated through libraries: central knowledge repositories of scrolls and books, akin to modern databases, which were indexed by librarians and read by scholars & tutors. Personal tutors, knowledgeable in these texts, provided verbal instruction, which was crucial in societies with low literacy and a strong oral tradition. ChatGPT can be seen as an ancient, well-read scholar that uses its extensive training data to answer queries, much like historical advisors. Voice interaction echoes this oral tradition, offering a natural way to access information, akin to learning from a tutor. Historically, however, such resources were often limited to the privileged few, whereas a virtual tutor that is accessible anytime and covers a vast array of topics democratizes knowledge, especially for the least privileged.

What’s interesting about voice chat?

The four key things that currently make or can make voice chat transformational are ubiquity, spatial audio, improved bandwidth of information exchange and contextual understanding & continuity. The sum of these parts exceeds their individual value:

Ubiquity: The mobile revolution has made it possible to access information anytime and anywhere, enhancing internet interaction. I use mobile apps like Google Keep and Assistant for on-the-go information search and thought journaling. This often comes from thoughts sparked while walking, driving, or socializing. Outdoor activities, like walking, boost brain function and creativity due to increased blood flow and sensory stimulation. They also promote neuroplasticity, the brain's ability to form and reorganize synaptic connections. People often walk to clear their thoughts and then record them on a device. It's also common, albeit inconvenient, for people to walk while reading a physical book, Kindle, iPad or phone to gain information. However, shifting attention to a device can disrupt the thought process and learning. Voice interaction can reduce this disruption by simplifying the communication to a natural conversation, which comes very naturally to humans.

Spatial Audio: Building on the previous topic, Apple's AirPods business is poised to generate over $14.5 billion in 2023, making it larger than Twitter, Shopify or Spotify. AirPods enhance auditory experiences with spatial audio, which stimulates brain areas linked to spatial and auditory processing. While we're only scratching the surface of voice search using ChatGPT, spatial audio can support a seamless back-and-forth conversation between the human and the assistant, rendering & ingesting information through earbuds or other voice outputs and integrating deeply with one's computing devices. It mimics how we experience sound in the real world by engaging the brain's auditory processing pathways more effectively than traditional stereo sound. Meta's Ray-Ban smart glasses are a fantastic example of this, wherein one can chat with the Llama 2 powered Meta AI while wearing the glasses on the go. The triggers can be user initiated, such as "Hey Meta!" for the Ray-Ban glasses, or service initiated, such as notifications on one's iPhone cast to AirPods. While ChatGPT is solely user initiated at this point, there is a ton of exploration to be done to find various triggers for voice-initiated human-machine interaction. Bill Gates also talks about this in his latest blog post on AI agents, along with various use cases. Bill Gurley & Phil Rosedale also talk about the importance of spatial audio in this Invest Like the Best episode with Patrick O'Shaughnessy, when discussing the audio metaverse and why applications like Clubhouse & Twitter Spaces bring this concept to life.

Improved bandwidth for information exchange: For someone with large fingers, typing on a QWERTY keyboard can be inefficient and error-prone, hindering activities like web searches or messaging. Conventional keyboards may not effectively convey speech nuances and can be difficult for those with disabilities. Voice interaction, a more natural method, can be faster and more accessible, allowing multitasking and reducing physical strain from typing. The average person speaks at approximately 150 words per minute, while the average typing speed is around 40 words per minute, so speaking is roughly 3.75 times faster. Voice communication is a natural human ability, requiring no special training or adaptation. Combining ubiquity and efficiency, it also makes multitasking practical: one can retrieve information while cooking, driving or exercising.
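To make the speaking versus typing comparison concrete, here is a minimal sketch of the arithmetic. The two rates are the averages quoted above; the function names and the 300-word message length are assumptions chosen purely for illustration.

```python
SPEAKING_WPM = 150  # average speaking rate quoted in the text
TYPING_WPM = 40     # average typing rate quoted in the text


def seconds_to_convey(word_count: int, words_per_minute: float) -> float:
    """Return how many seconds it takes to convey word_count words."""
    if words_per_minute <= 0:
        raise ValueError("words_per_minute must be positive")
    return word_count / words_per_minute * 60


def speedup(speaking_wpm: float = SPEAKING_WPM, typing_wpm: float = TYPING_WPM) -> float:
    """Return how many times faster speaking is than typing."""
    return speaking_wpm / typing_wpm


if __name__ == "__main__":
    words = 300  # hypothetical message length
    print(f"Speaking: {seconds_to_convey(words, SPEAKING_WPM):.0f} s")
    print(f"Typing:   {seconds_to_convey(words, TYPING_WPM):.0f} s")
    print(f"Speedup:  {speedup():.2f}x")
```

Under these averages, a 300-word message takes about two minutes to speak and seven and a half minutes to type. Individual speeds vary widely, so the ratio is a rough guide rather than a guarantee.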

Contextual understanding & continuity: While modern search engines personalize recommendations based on one's search history over time, a voice conversation can turn information retrieval into a continuous chain of thought (CoT), in which previous turns are carried forward as near-perfect memory within the model's context window. For example, instead of starting a fresh search on a topic that I've previously searched for, I pick up a former conversation from my chat history to revisit and build on the chain of thought. While this may reduce the diversity of thought in some cases, it helps retrieve historical CoTs and build on them, fully utilizing the initial context and maintaining continuity over multiple interactions. Essentially, one continues where one left off in a coherent manner, which could potentially be better than raw web searches.
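The idea of carrying earlier turns forward within a limited context window can be sketched as follows. This is an illustrative toy, not how ChatGPT is implemented: the `Conversation` and `Message` classes are hypothetical, and the budget counts whitespace-separated words as a stand-in for the tokens that real models count.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Message:
    role: str  # e.g. "user" or "assistant"
    text: str


@dataclass
class Conversation:
    max_words: int = 50  # hypothetical budget; real systems count tokens
    messages: List[Message] = field(default_factory=list)

    def add(self, role: str, text: str) -> None:
        """Append a new turn to the stored history."""
        self.messages.append(Message(role, text))

    def context(self) -> List[Message]:
        """Return the most recent turns that fit the budget, oldest first."""
        kept: List[Message] = []
        used = 0
        # Walk backwards so the newest turns are kept first.
        for message in reversed(self.messages):
            cost = len(message.text.split())
            if used + cost > self.max_words:
                break
            kept.append(message)
            used += cost
        kept.reverse()
        return kept
```

Resuming an old chat then amounts to loading its stored messages and calling `context()` before the next turn, so the model sees as much of the earlier chain of thought as the budget allows.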

Why now?

To understand why now is the right time for voice driven interfaces, let’s think about this from a network bandwidth, compute and input / output quality lens:

Compute: Running speech recognition, conversion to text, output inference and conversion from text to voice requires a significant amount of compute due to the resource intensive nature of models behind them and the latency constraints. Modern day GPUs can handle these tasks more efficiently and at a larger scale than ever before, making real-time voice processing feasible for widespread use.
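The stages named above (speech to text, inference, text to speech) can be sketched as a simple pipeline that also records how long each stage takes, since latency is the constraint that matters for real-time use. Everything here is an assumption for illustration: `run_voice_turn` and the `fake_*` stand-ins are hypothetical, and a real system would call actual speech and language models in their place.

```python
import time
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class StageTiming:
    name: str
    seconds: float


def _timed(name: str, func: Callable, arg, timings: List[StageTiming]):
    """Run one stage and record its wall-clock duration."""
    start = time.perf_counter()
    result = func(arg)
    timings.append(StageTiming(name, time.perf_counter() - start))
    return result


def run_voice_turn(
    audio: bytes,
    transcribe: Callable[[bytes], str],
    generate_reply: Callable[[str], str],
    synthesize: Callable[[str], bytes],
) -> Tuple[bytes, List[StageTiming]]:
    """Process one spoken turn: audio in, audio out, with per-stage timings."""
    timings: List[StageTiming] = []
    text = _timed("speech_to_text", transcribe, audio, timings)
    reply = _timed("inference", generate_reply, text, timings)
    speech = _timed("text_to_speech", synthesize, reply, timings)
    return speech, timings


# Hypothetical stand-ins so the sketch runs without any models.
def fake_transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_generate_reply(text: str) -> str:
    return f"You said: {text}"


def fake_synthesize(text: str) -> bytes:
    return text.encode("utf-8")
```

Because the stages run one after another, the user-perceived delay is roughly the sum of the three timings, which is why faster hardware at each stage matters for conversational use.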

Network Bandwidth: Standard MP3-quality audio streams at about 128,000 bits per second, which requires an internet speed of roughly 0.128 Mbps. Early internet connections, like dial-up, were painfully slow, operating at speeds around 56 kbps, less than half of what such a stream needs. Downloading anything substantial, such as a song or a movie, could take hours. In contrast, the average internet speed in the U.S. in 2023 is 171.30 Mbps, and globally the average has reached 45.6 Mbps, leaving ample headroom for fast and reliable audio streaming.
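The bandwidth figures above can be compared directly with a short calculation. The numbers are the ones quoted in the text; the function names are assumptions, and the sketch ignores protocol overhead and real-world variation in connection quality.

```python
VOICE_STREAM_BPS = 128_000    # MP3-quality audio stream
DIAL_UP_BPS = 56_000          # dial-up era connection
US_AVG_BPS = 171_300_000      # quoted 2023 U.S. average (171.30 Mbps)
GLOBAL_AVG_BPS = 45_600_000   # quoted global average (45.6 Mbps)


def to_mbps(bits_per_second: float) -> float:
    """Convert bits per second to megabits per second."""
    return bits_per_second / 1_000_000


def headroom(link_bps: float, stream_bps: float = VOICE_STREAM_BPS) -> float:
    """Return how many times the stream's bitrate fits into the link."""
    return link_bps / stream_bps


if __name__ == "__main__":
    print(f"Stream needs {to_mbps(VOICE_STREAM_BPS)} Mbps")
    for label, link in [
        ("Dial-up", DIAL_UP_BPS),
        ("Global average", GLOBAL_AVG_BPS),
        ("U.S. average", US_AVG_BPS),
    ]:
        print(f"{label}: {headroom(link):.2f}x the stream bitrate")
```

A headroom below 1 means the link cannot carry the stream in real time, which is the case for dial-up. The modern averages exceed the requirement by a factor of several hundred or more.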

Quality: Speech input (the microphone) has taken massive leaps in sensitivity, noise cancellation and beamforming, which makes the microphone more sensitive in the direction of the speaker's voice. For voice output, the quality and texture of sound from modern consumer devices has significantly improved in terms of fidelity. Apple introduced spatial audio with dynamic head tracking for AirPods through a firmware update in September 2020, while Sony released 360 Reality Audio in late 2019. Such monumental changes in audio rendering open up the possibility of democratizing voice-driven UIs.

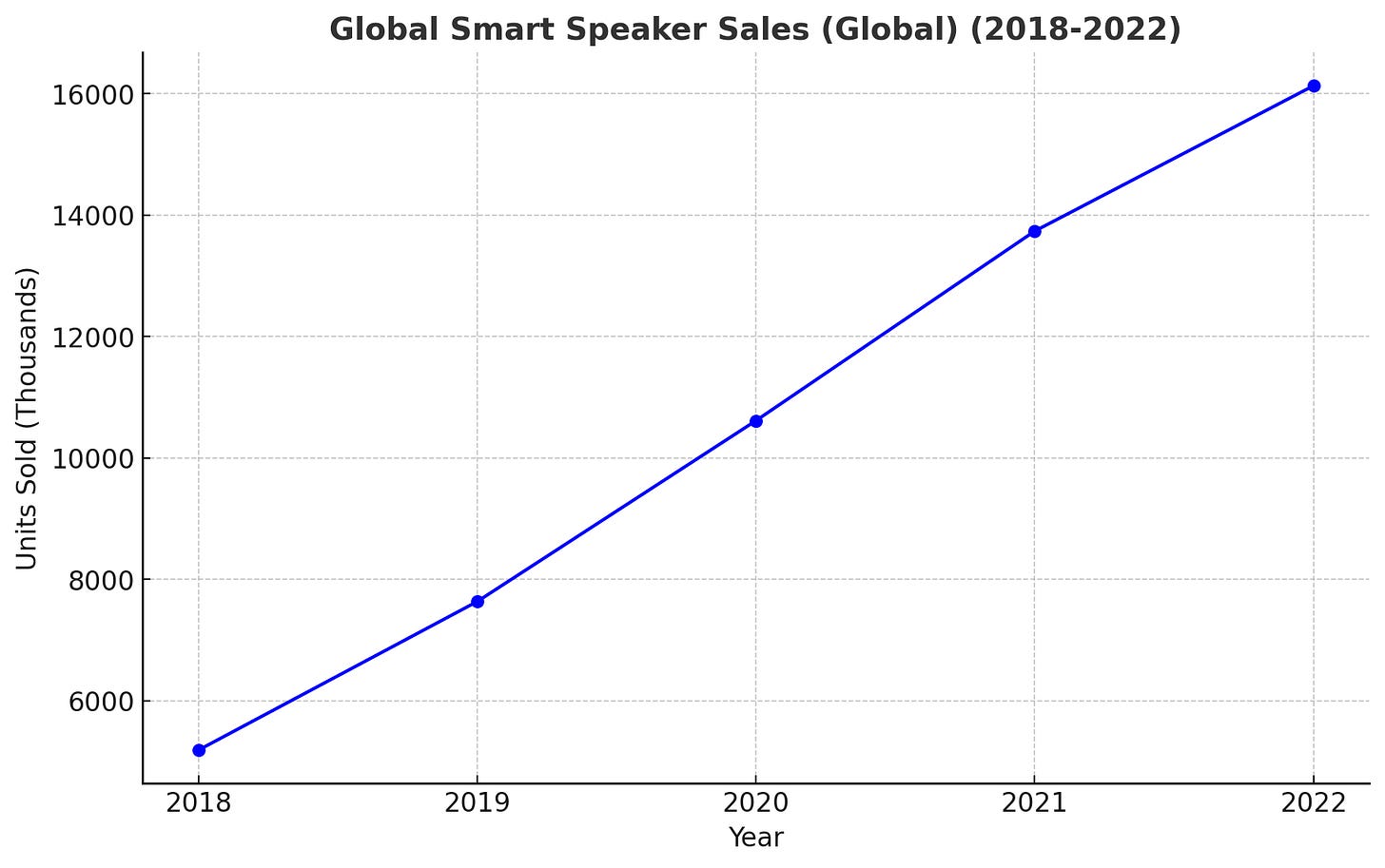

Ubiquitous devices: Voice-driven interfaces cannot reach an inflection point on their own without a paradigm shift in hardware adoption. While we're more than a decade into the mobile wave, we've only recently started seeing adoption at scale for wireless earbuds, smart watches and smart home devices, and may see a growing trend for smart glasses. Sales of gaming-oriented virtual reality devices still show a slow or flat growth curve, but Apple's step into mixed reality via the Vision Pro will be interesting to watch. The graphs below showcase the last 5 - 6 years of active users / units sold for such devices, which signify a steep adoption rate and make a stronger case for apps that can now fully leverage voice capabilities.

The Humane AI Pin was launched recently by former Apple designers and predominantly indexes on gesture (hand / palm pinches) and sound as mediums of interaction. While the product's success is yet to be determined, this is a great example of a device that introduces ubiquity and spatial audio while being powered by ChatGPT as its conversational interface, switching between a holographic-style display and a voice interface.

Enterprise Use Cases

The first few iterations of voice-driven UIs might very well be designed as co-pilots on top of existing visual interfaces, as we're seeing with most AI-driven assistants today. Over time, however, as hardware adoption for ubiquitous devices becomes more mainstream, voice-driven user interfaces may become more and more native to specific types of devices. Here's a simple visual that illustrates the same for both external and internal use cases.

Consumer Use Cases

In the consumer scenario, the easiest way to think about use cases is to think about any service that you seek from a professional, such as a teacher / tutor, health practitioner, financial advisor or travel agent. The fundamental use case can involve a conversational interface, since voice is the most natural medium of interaction and mimics how we interact with such personas in present-day life. The core benefits of having such specialized agents include on-demand access, which means that you would be able to talk to your AI therapist at 4 AM. Each of these agents will be incredibly knowledgeable, infinitely patient and empathetic, which is the highest standard one could expect from such a persona.

Underlying architecture

Given that large language models are very flexible, with the ability to solve a wide range of generative tasks, we've recently seen some great advancements in large language models focused on speech understanding & generation. For example, Google's AudioPaLM fuses text-based and speech-based language models, PaLM-2 and AudioLM, into a unified multimodal architecture that can process and generate both text and speech. The following is a representative architecture diagram of AudioPaLM. This may significantly streamline input & inference. Based on recent research, automatic speech recognition (ASR) models built by adding audio encoders to the input architecture seem to achieve higher accuracy than text-based models, especially on multilingual data, which is a crucial aspect of design given the diversity of languages spoken.

Prominent Companies

As of the writing of this article, the core innovation seems to be happening at the hardware & infrastructure layer while we’re yet to see more innovation on the end user experience layer:

Hardware layer: There almost seems to be a commoditization wave for hardware supporting spatial audio, including earbuds, smart home devices, watches and smart glasses, from incumbents such as Apple, Google, Meta and Amazon. This is what is currently setting the precedent for a possible future wave of audio-native AI services.

Software Infrastructure: This layer empowers the end user applications through voice transcription (speech to text), generation (text to speech), overlay & editing. This also seems to be a commoditized space but a lot of innovation is shifting from accuracy of output (which has been largely optimized for already), to personalization, building natural voice interfaces and multi-lingual support that helps improve efficacy of such interactions.

Broad AI Assistants: As of the date of this writing, Google Assistant, Alexa and Samsung's Bixby are some of the most prominent AI assistants that have been around for a while. ChatGPT's latest functionality for speech ingestion and generation is state of the art, especially in the efficiency, depth, verbosity and human-like nature of the conversation, which leverages GPT-4.

Enterprise Voice Assistants: While voice analytics tools for sales & support such as Gong, Cresta, Clari, Observe.ai and kore.ai have been around for some time, we're seeing new tools for complex meeting summarization such as Otter.ai & Fireflies, as well as competition from incumbents such as Zoom's AI Companion & the Teams assistant.

Personal Voice Assistants: This area is a massive greenfield and we're just starting to see more traction with AI doctors & speaking coaches such as Elsa AI.

Thinking about the greenfield

While we might be at the inflection point for voice based interfaces to be more prominent, the following advancements are yet to be seen, and may bring tremendous value add:

Personalization: As of the writing of this article, one can choose from 5 different voices within ChatGPT. Deeper personalization, however, may involve training models on a user-defined tone and voice through historical data. Personalization in content can also stem from longer context windows drawn from past conversations, and not merely the two custom instruction questions that ChatGPT allows a user to pre-fill today. This can be similar to how a personal tutor gets acquainted with a student's style of listening or ingesting information by practicing visual learning methods, drawing common analogies to specific concepts, reiterating points and so on. Moreover, Custom GPTs open up the possibility of contextualized conversations by uploading a pre-determined set of knowledge bases to get responses specific to the topic at hand.

Understanding non-verbal cues: Not all aspects of one's dialogue can be expressed in verbal cues. Emotional cues, tonal variations and speech patterns can speak an additional 1000 words (metaphorically), and thus a plain speech-to-text transcript fed as model input for next-token prediction has limited expression. Future voice models may be able to alter the response based on such cues, making responses more empathetic by adapting the tone or altering the content to create a more natural and human-like conversation.

Continuous Ubiquity & Deep Integration: Similar to how Apple allows a user to switch AirPods between a MacBook and an iPhone and use various apps (for example, messaging) across devices, ubiquity in voice conversations can also be facilitated across several devices, including wearables such as smart glasses and smart watches as well as mobile phones and laptops, allowing easy switching between text and voice-based mediums and thus maintaining continuity of conversation / CoT. Ubiquity can also be achieved between different AI services, where exposing context from one service may be beneficial for others. For example, what your personal AI nutritionist recommends might be important for your AI doctor. However, this warrants a strong security framework to ensure user privacy, and this broadly applies to all AI applications.