Autonomous Workflow Agents and the Generative AI Turbocharge

How generative AI can either turbocharge or disrupt the existing craft of business workflow automation

A walk down the Visual Basic lane…

Business process automation as a concept has been prevalent since the advent of modern-day business applications. Visual Basic (VB) was first introduced in 1991. It descended from BASIC (Beginner’s All-purpose Symbolic Instruction Code) and let users interact with their computers both programmatically and visually. From inception to the present day, we’ve seen several generations of tools and software platforms take a stab at this problem, with a vision of automating manual processes in the enterprise to create cost savings and increase business efficiency. The ethos of these products has centered around automating mundane, simple tasks involving data capture and entry workflows that are rules-based and don’t need complex human judgement or decision making. “Robotic Process Automation” (RPA) has now become a multi-billion dollar industry that aims to democratize workflow automation by automating such simple tasks. With the advent of AI, especially in the areas of computer vision and natural language processing (NLP), such tools have grown in their coverage of more complex use cases and data types. With the recent progress in large language models (LLMs), there’s a potential turbocharge awaiting such tools: semi-structured and unstructured data extraction, stronger integration of legacy and new systems, and complex human-in-the-loop interfaces that unlock higher efficiencies. Let’s dive deeper into the landscape of tools, understand how a typical corporate workflow works, how these tools are designed from a product perspective, what they do well and don’t do well, and evaluate how those limitations and unexplored use cases can help generate enterprise value for customers and the companies serving them.

Understanding the current landscape

The existing landscape includes companies that have been in business for 35+ years to companies that have been incorporated in the past decade. Here’s a quick overview on the value chain:

Robotic Process Automation as an industry surfaced after 2010 and comprises big players such as UiPath, Automation Anywhere and Blue Prism, plus several smaller players, all of which have recently seen stiff competition from incumbents such as Microsoft via its Power Platform.

Integration Platforms such as TIBCO have existed for 2+ decades, but have been turbocharged by the advent of cloud computing, creating the new category of iPaaS tools, which includes newer entrants such as Workato, MuleSoft, Boomi and Zapier.

Process Mining and Discovery as an industry has surfaced in the past decade, as a result of business processes being managed almost entirely on modern-day CRM & ERP systems. With the advent of automation, companies such as Celonis, Kryon, Pega and Signavio provide deeper insights into a business process and monitor an employee’s workflow to highlight bottlenecks and manual steps, which eventually feeds into what should be automated using RPA & iPaaS.

Document Understanding has also been around the block for 3+ decades with traditional players such as ABBYY and Kofax, but has been turbocharged by advancements in computer vision & NLP, giving birth to various generalized document understanding offerings such as Hyperscience, Instabase, Google Document AI and Azure Form Recognizer, and even verticalized solutions such as Ocrolus, Vic.ai and Klarity.

Conversational platforms such as NICE have been around since 1986, primarily for assisting contact centers in managing high volumes of customer support requests. The advent of AI and RPA tools has given birth to general conversational AI platforms such as Dialogflow (Google), yellow.ai and Kore.ai that build multi-modal conversational solutions across a wide variety of customer engagement use cases. Companies such as Observe.ai, Uniphore and Aisera continue to provide specific solutions for contact centers via more modern interfaces and advanced voice inference techniques.

Understanding traditional enterprise operations

Having understood the landscape, let’s leverage a few infographics to understand how a typical enterprise operates and the various systems that govern the day to day operations to better understand the problems that these tools solve.

The typical modern-day systems architecture leverages multi-channel systems of engagement to interact with customers, to sell products or support existing products, using websites, email, documents, chat / text & calls. The front office refers to the section of a company that manages customer interactions. Employees in the front office typically leverage systems of record such as ERP (Enterprise Resource Planning), CRM (Customer Relationship Management) and HCM (Human Capital Management) to interact with customers and store information regarding existing products and employees. Systems of action include common productivity and work management tools that are used to derive actionable next steps from customer interactions and execute on those steps. The back office performs tasks that are essential to a company's operations, serving the actionable steps coming from those customer interactions. This part of the business also leverages systems of record and systems of action; however, it may also leverage various third party systems of reference, which include public and private databases, to validate customer and other information. Over the course of these business operations, companies have increasingly leveraged Systems of Insights to harness actionable insights from all the data that flows through the system.

Head work versus hand work: A typical employee’s role can be broadly categorized into head work and hand work, where the head work involves the customer interactions, simple to complex decision making and analyzing insights. The hand work involves the manual work item selection, capturing data items from source systems, inputting them to target systems and submitting the finished work items. Most of the automation tools thus far have been able to largely automate the hand work due to its rules based, mundane & repetitive nature that doesn’t involve complex judgement & decision making.

Corporate Micro-processes: Several enterprises with complex processes typically architect the core process into further sub-processes delegated to specific employees with very clearly stipulated roles & responsibilities. As a representative example, the input work from customers is typically acknowledged by employee A, who captures data and passes it on to employee B, who then enters the actionable data into target systems, while employee C may validate the data against third party systems of reference. There may be multiple lines of supervisors who review & validate the work, and potentially send the work item back for correction. Once reviewed and validated, the work item is typically executed based on the inferred output.

Data types

Employees deal with several types of data that increase in complexity, from structured to semi-structured to completely unstructured data. This could be as simple as structured “key-value” pair data found in modern-day applications and, in many cases, in the many legacy systems that are still surprisingly prevalent in the enterprise. However, a large proportion of the data is still captured in semi-structured & unstructured formats such as documents, which may be as simple as a tax form, invoice or purchase order, or as complicated as a contract or an email. The latter is where the majority of the business and transactional information between customers and enterprises tends to be stored, and it isn’t something that existing tools have been able to extract well with current capabilities.

What makes a great use case?

The best use cases for workflow automation fall into one of the following categories:

Micro-Processing:

Large single process fragmented across multiple FTEs (full time employees). An example of this includes a financial trade settlement process within securities operations that takes roughly 3 days, involving multiple people & hand-offs.

One or several small processes conducted repeatedly by a large volume of FTEs. A very apt example is driver onboarding for ride share companies, which involves reading through onboarding documents such as a W-4 or driver’s license and inputting information into the company’s internal systems.

Semi-Structured & Unstructured Data:

While structured data can be easy to extract from source systems using approaches ranging from existing API integrations to GUI based automation, the following sources of data create high levels of manual effort, thus making a great use case. These are the high value data types:

Semi-structured documents with high variability such as invoices, order forms etc.

Unstructured documents with free form text entities such as revenue contracts that consist of the total contract value, payment schedule etc.

Legacy and Traditional Systems:

The disparity in systems architecture is a result of the technical debt that an organization continues to build as it adopts more and more business systems. The nature of such systems can be characterized by:

Legacy systems with little or no API capability such as mainframes and software built on a monolithic architecture.

Systems with limited APIs such as legacy versions of SAP, PeopleSoft or ones that operate on legacy SOAP based API architecture (Workday). Such systems may also have legacy UIs including thick clients built on .NET, or web applications built on older stacks that may not be the easiest to scrape programmatically.

Making the business case

The key drivers that typically make a strong business case include the inability of several modern & legacy systems to integrate with each other via modern API based approaches, high volumes of data that are otherwise moved manually, the need to reduce the existing human error rate, and a requirement to adhere to strict SLAs. The outcomes include hard, easily quantifiable dollars such as FTE related cost savings and the reduction in the cost of hiring and of coordination between FTEs. However, most companies also realize soft dollars post automation, from increased customer engagement and reduced financial losses caused by human error.

Massive Industry opportunities

While business process automation applies to almost all industries that involve corporate data & customer workflows, there are a few that truly stand out. Based on how we define the use case, the two industries that stand out the most are Business Process Outsourcing (BPO) & Financial Services. The key reasons come down to the following:

These industries have a ton of micro-processes. The BPO industry may have more small, mundane, high-volume processes, whereas the financial services industry has more complicated & longer workflows.

They deal with a lot of semi structured and unstructured data, especially documents, emails and text messages / voice based inputs.

These 2 industries continue to maintain a mix of both legacy and new applications that are incredibly complex and hard to migrate from.

Product workflows for existing Business Process Automation platforms

Most existing products have similar workflows in terms of how they approach the process, from identifying a use case, creating a low-code app that automates the process, and deploying it, through to interaction, monitoring & analysis.

Process Mining includes tracking event data from business systems such as Salesforce to create a process map that provides key metrics and insights into the steps undertaken, time spent at each step, potential bottlenecks etc. Process Discovery includes actual recording of the process steps at a click level to build a high level prototype of the automation based on the recording.

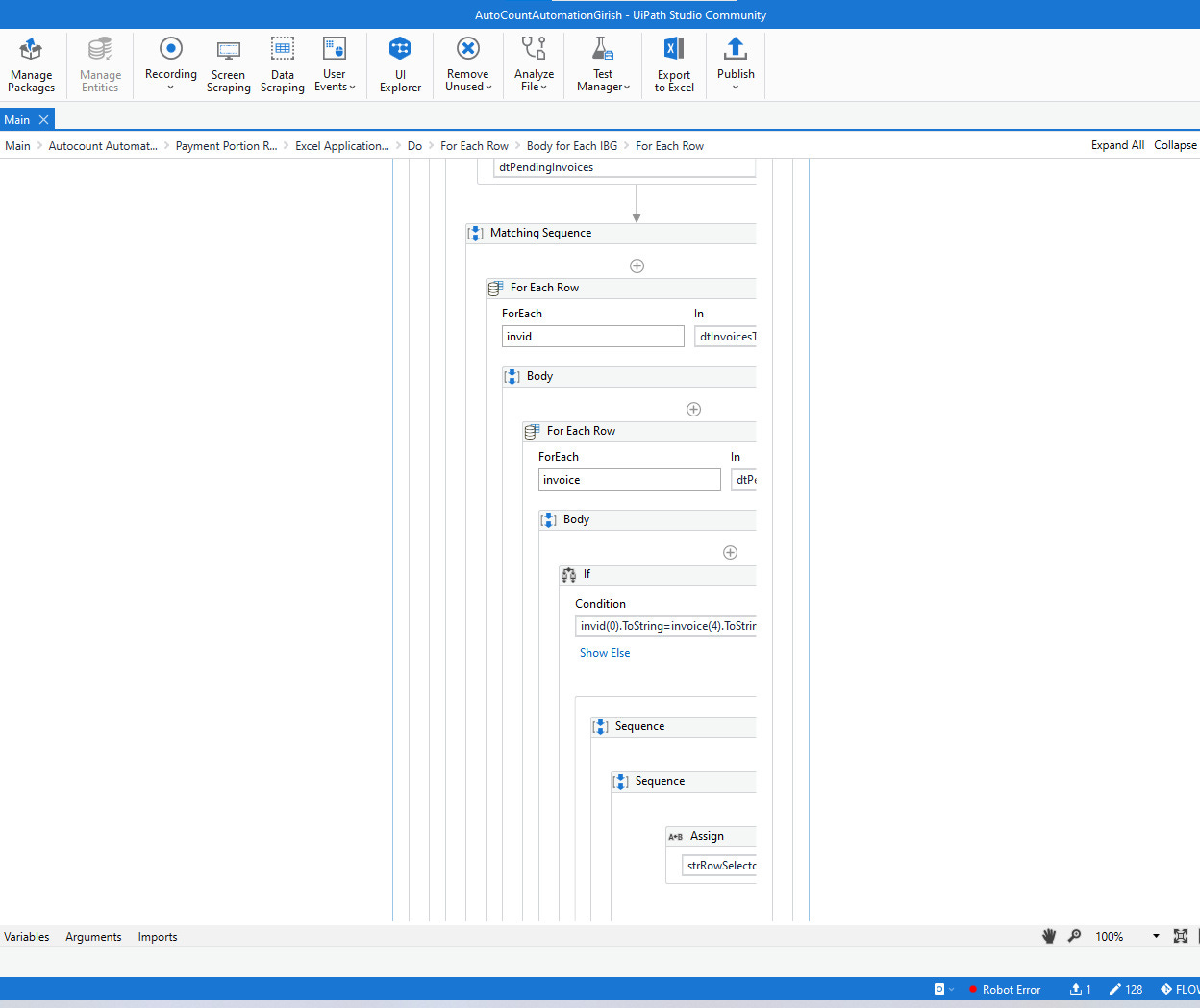

Low-Code / No-Code Application Development involves the actual creation of a workflow automation, typically done via a simple drag and drop user interface that enables a user to import application integration packages, logical constructs or record UI based actions.

Deployment and Management comprises tools that help a user manage and monitor various automations that have been deployed to specific workstations.

Interaction and Monitoring empowers a user to interact with a co-pilot on their workstation that enables them to run various “bots” which they can interact with, to provide inputs or review outputs via tweak-able UIs that one can build within the platform.

Analyze & Visualize provides the capability for users to track / flag various data points that pass through over the course of the business automation, and to build dashboards with specific analytics that provide both transactional insights for the process & business insights based on the data being actioned.
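To make the Process Mining stage above a little more concrete, here is a minimal sketch of how per-step timings and a bottleneck could be derived from system event data. The event log, its field layout and the function names are all hypothetical, and real process mining products do far more than this (variant analysis, conformance checking and so on).

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical event log: (case_id, step, ISO timestamp). Illustrative data only.
EVENTS = [
    ("case-1", "received", "2024-01-01T09:00:00"),
    ("case-1", "data_entry", "2024-01-01T09:30:00"),
    ("case-1", "review", "2024-01-01T11:30:00"),
    ("case-2", "received", "2024-01-01T10:00:00"),
    ("case-2", "data_entry", "2024-01-01T10:10:00"),
    ("case-2", "review", "2024-01-01T13:10:00"),
]


def step_durations(events):
    """Average minutes elapsed for each (step, next_step) transition."""
    by_case = defaultdict(list)
    for case_id, step, ts in events:
        by_case[case_id].append((datetime.fromisoformat(ts), step))

    samples = defaultdict(list)
    for rows in by_case.values():
        rows.sort()  # order each case's events by timestamp
        for (t0, s0), (t1, s1) in zip(rows, rows[1:]):
            samples[(s0, s1)].append((t1 - t0).total_seconds() / 60)

    return {transition: sum(v) / len(v) for transition, v in samples.items()}


def bottleneck(events):
    """Transition with the highest average elapsed time."""
    durations = step_durations(events)
    return max(durations, key=durations.get)


if __name__ == "__main__":
    for transition, minutes in step_durations(EVENTS).items():
        print(transition, round(minutes, 1))
    print("bottleneck:", bottleneck(EVENTS))
```

In this toy log the hand-off from data entry to review is the slowest transition, which is the kind of signal that would feed the decision on what to automate.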

Evolution of the Business - IT Collaboration Model

The key value proposition of RPA, from an ease-of-implementation perspective, was that lots of applications do not have APIs and, even if they do, most API integrations take a lot of time and specialized integration resources to build, which further adds cost of coordination between business & IT. To solve for this, most of the recent companies have spent tons of time and effort on the citizen development model, in which a business user builds their own automation using the low-code / no-code workbench, thus reducing the dependency on IT. However, most successful implementations have needed greater IT involvement to take the basic skeletons that business users build and harden them to run reliably over long periods of time. The existing citizen development model still depends heavily on IT and on external partners, who market the thousands of “RPA developers” they have available to be staffed on such projects.

What do existing tools do really well?

Compared with the first versions of Visual Basic, existing tools have brought the concept of automation to greater life in the modern digital enterprise in the following ways:

Intuitive drag and drop interfaces have truly simplified workflow automation for the mundane and repetitive tasks to constructs that don’t necessarily need complex programming paradigms. Building a bot is a more visual process and resonates well with business users, who tend to be more process oriented.

UI driven automation has come a long way and has been hardened over the years to detect complex UI components across a wide range of modern and legacy desktop and web applications in a relatively reliable DOM based mechanism.

Granular and diverse integration packages with modern day applications such as Salesforce, SAP, Workday provide users with out of the box capabilities to create simple workflow automations. Tools have been able to seamlessly mix API integration based packages with UI workflow tools to create a consistent developer experience.

Modern tools have been able to implement efficient orchestration on the cloud that can be scaled up or down to multiple instances based on the input workloads, and can also be deployed on-prem for customers with sensitive data thus managing and reducing the total cost of ownership and providing scalability.

Interactive application builders have created the ability for users to interact with a guided agent that sits on the user’s desktop and provides a human in the loop experience for complex workflows that involve human review steps.

What are the key gaps and how can AI help?

Process Discovery

Despite such tools running in the background, process discovery is relatively slow, inefficient and rules based in terms of tracking a user’s actions, which generally creates a bad user experience and, sometimes, inaccurate process maps. The initial automation prototype generated after process discovery is typically very bare bones and relatively non-functional, thus eventually requiring developer input to add the complex logic needed for a functional automation. Existing AI stacks offer multi-modal inputs that provide a probabilistic approach to ingesting image, video & text based inputs while watching a user’s screen to understand / infer the overall scope of a workflow, which creates the potential to build more than just a bare-bones workflow application. Having been trained on internet-scale data also gives such models broader context on how the same process may be done in similar settings, which further helps contextualize the process in scope.

Low-Code / No-Code Workbench & Systems Integration

Despite the low-code nature of the workflow, overall development still takes time and can become increasingly arduous as the workflows start requiring complex logical constructs. Most workflows rely on static logic that has to be pruned / hardened over a period of time based on runtime results & added exceptions as the workflow processes more transactions. Such refactoring eventually ends up requiring incremental development effort to embed more complicated logic / edge cases to maintain the workflow. Solutions analogous to what we have today with GitHub Copilot could very well extend to such workbenches.

Furthermore, UI based automation workflows tend to break frequently due to the constantly changing UI of the source systems, and the existing rules based DOM mechanism for detecting specific UI components has limited capacity to absorb such changes. Newly introduced models specialized in image detection, combined with multi-shot prompting & modern reinforcement learning approaches, provide a compelling path for users to communicate such edge cases and UI changes to automations, so that refactoring becomes a more seamless process.

Semi-Structured & Unstructured Data

Documents, be they digital or scanned, represent a major proportion of an enterprise workflow. The variability in “keys” and their respective positioning on a page in semi-structured documents has thus far been a considerable barrier to accurate data extraction, especially for use cases such as accounts payable and receivable, which involve financial transactions that warrant accuracy. As a result, existing tools end up requiring significant human review. The capability of an LLM to understand numerous textual and spatial variations of a search term via embeddings will help turbocharge the extraction and improve the accuracy of such workflows.

However, the maximum impact for LLMs would apply to free form unstructured text in documents such as legal contracts, wherein extracting revenue amounts, terms and payment schedules may imply significant cost savings. Existing tools have tried to embed NLP models available from their marketplaces, but these models have often had to be fine-tuned or trained on specific data, which is time consuming.
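As a rough illustration of the “key variability” problem in semi-structured documents, the sketch below maps differently worded field labels onto canonical field names. It deliberately swaps in plain string similarity from Python’s standard library (`difflib`) as a stand-in for embedding similarity, so it only captures surface-level variation; the field names, label variants and threshold are hypothetical.

```python
from difflib import SequenceMatcher

# Hypothetical canonical fields and label variants seen across vendor invoices.
CANONICAL = {
    "invoice_number": ["invoice number", "invoice no", "inv #", "bill number"],
    "total_amount": ["total", "amount due", "total amount", "balance due"],
    "due_date": ["due date", "payment due", "pay by"],
}


def similarity(a, b):
    """String similarity in [0, 1]; a stand-in for embedding cosine similarity."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def match_key(label, threshold=0.6):
    """Return the canonical field whose variants best match `label`, or None."""
    best_field, best_score = None, 0.0
    for field, variants in CANONICAL.items():
        for variant in variants:
            score = similarity(label, variant)
            if score > best_score:
                best_field, best_score = field, score
    return best_field if best_score >= threshold else None


if __name__ == "__main__":
    for label in ["Invoice No.", "Total Amt", "Qty"]:
        print(label, "->", match_key(label))
```

A label that matches nothing well enough returns `None`, which is the natural point to hand the field over to a human reviewer rather than guess.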

Adaptive Workflows based on Business Insights

Existing tools deliver relatively deterministic insights, with maybe some forecasting functionalities, via dashboards that are limited to numeric data. There is, however, a ton of scope for textual and image based insights beyond numbers that could paint a much more insightful picture of the automated workflow. Take the example of an accounts payable specialist processing transactions on a week by week basis: the capability to flag a payment transaction for a potentially higher than stipulated value for a specific vendor, based on contextual understanding of the underlying contract terms of payment and on anomalies in historical payment data, is an example of truly adaptive automation driven by text based and historical insights. Existing tools do provide model plug-ins to do so, but LLMs provide the capability of deeply integrating such functionalities into existing model workflows.
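The accounts payable example above could be sketched roughly as follows. This assumes the contract’s payment cap has already been extracted upstream (for instance by an LLM reading the contract) and uses a simple z-score against past payments as the anomaly check; the function name, parameters and threshold are illustrative assumptions, not a description of any vendor’s implementation.

```python
from statistics import mean, stdev


def flag_payment(amount, contract_cap, history, z_threshold=3.0):
    """Return the reasons a payment should go to review (empty list if none).

    amount       -- payment being processed
    contract_cap -- hypothetical per-payment limit taken from the contract, or None
    history      -- past payment amounts for the same vendor
    """
    reasons = []

    # Check against the contractual limit, if one is known.
    if contract_cap is not None and amount > contract_cap:
        reasons.append("exceeds contract cap")

    # Check against historical behaviour using a z-score (needs 2+ data points).
    if len(history) >= 2:
        mu, sigma = mean(history), stdev(history)
        if sigma > 0 and abs(amount - mu) / sigma > z_threshold:
            reasons.append("unusual versus payment history")

    return reasons


if __name__ == "__main__":
    past = [1000, 1050, 950, 1020, 980]
    print(flag_payment(1500, 1200, past))
    print(flag_payment(1010, 1200, past))
```

Returning reasons rather than a bare boolean gives the specialist something to act on when the transaction lands in their review queue.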

Workflow Marketplace

While most companies currently operate marketplaces to share automations between developers, create a developer ecosystem and streamline adoption of the platform, only the truly API based integrations are robust enough to be used in a plug & play format. Any automation that’s built using the native workbench’s logical constructs and UI driven screen recordings cannot be used out of the box and requires significant refactoring to make it work for the specific user scenario. This is mostly because the out-of-the-box (OOTB) automation lacks context of the user process and the underlying system nuances. For example, a UI automation built on Salesforce Lightning will almost certainly not work on Salesforce Classic. Existing AI techniques can potentially empower users to easily refactor existing automations hosted on marketplaces to create true interoperability. Moreover, the concept of prompting creates a whole new paradigm for marketplaces, wherein sharing prompts might be more helpful for developers than sharing low code.

Human in the loop

While existing tools have provided seamless methods to create human-in-the-loop style automations, most of the existing human in the loop interfaces to validate, fine tune and submit the output of existing deterministic workflow automations do not have any reinforcement learning tied to them. The modern reinforcement learning frameworks can be potentially implemented to improve this back and forth workflow and reduce the total time that an operator would spend on reviewing workflow outputs from a software bot.
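One way to picture a review loop that learns from the operator is sketched below. To be clear, this is a simple threshold-adjustment rule and not reinforcement learning; it only illustrates the idea that operator feedback should change how much work is routed to review. The class name, parameters and step size are hypothetical.

```python
class ReviewRouter:
    """Routes bot outputs to human review based on a confidence threshold
    that is nudged up or down by operator feedback."""

    def __init__(self, threshold=0.9, step=0.02):
        self.threshold = threshold  # outputs below this go to a human
        self.step = step            # how far one piece of feedback moves the threshold

    def needs_review(self, confidence):
        return confidence < self.threshold

    def record_feedback(self, confidence, was_correct):
        """Update the threshold from a reviewed or audited item."""
        if not was_correct and confidence >= self.threshold:
            # An auto-approved output turned out wrong: become stricter.
            self.threshold = min(1.0, self.threshold + self.step)
        elif was_correct and confidence < self.threshold:
            # A reviewed output needed no correction: relax slightly.
            self.threshold = max(0.0, self.threshold - self.step)


if __name__ == "__main__":
    router = ReviewRouter(threshold=0.9, step=0.05)
    print(router.needs_review(0.87))   # routed to a human at the start
    router.record_feedback(0.80, was_correct=True)
    print(router.needs_review(0.87))   # threshold has relaxed
```

Over time, a loop like this should shrink the review queue where the bot is reliably right and grow it where audits catch mistakes, which is the reduction in operator time the paragraph above is pointing at.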

Multi-Modal Interactions

While interacting with visual forms and trigger based approaches is the easiest way to initiate workflow automations, chat and / or voice based interaction interfaces are still lacking in existing tools. This is where zero-shot to few-shot prompting becomes a very compelling approach for collecting user input and triggering automated workflows.

What are some key considerations for enterprise adoption?

While consumer adoption has grown tremendously over the past few months, we’re finally starting to see enterprises adopting the new stack for specific workflows. However, concept to mass scale adoption will require the following:

Private / Hybrid Deployments: For heavily regulated industries such as banking, it is essential for customer data to stay on-premise and be processed locally, while the overall orchestration, development and management can occur on the public cloud. This may require such solutions to deploy and run models locally and to ship model updates on a frequent basis. As always, companies need to ensure that they follow enterprise grade encryption protocols and go through regular SOC 2 audits and GDPR compliance reviews.

Data sharing & business models: There will still be customers who may be open to sharing data (especially types that are not sensitive), so that they can leverage features that deliver higher value from updated and fine-tuned models arising from ingesting the shared data. Some creative business models may involve giving price credits to customers to further incentivize them to share data. This is yet to be seen.

Bull case versus bear case

Turbocharging existing & new companies

The bull case for generative AI turbocharging existing software platforms may come from one or many of the components mentioned above, wherein specific use cases that are now solvable with the modern AI architecture unlock massive enterprise value, either via product changes that integrate existing functionalities with such new AI approaches, or by rebuilding parts of the product natively using an AI-first approach to create ease of use. The latter will be more prevalent in new companies than old due to the classic innovator’s dilemma. This, however, does require reinventing the stack in some specific areas of the platform, while cleverly integrating in the other areas.

Disrupting existing companies

The bear case is primarily governed by the possibility that over the next few years, we may see a flurry of multi-modal interfaces that embed chat, voice & GUI based approaches to build more AI-native methods of interaction, wherein both existing and new vendors of the underlying systems in scope of a workflow may embed co-pilots in existing application layers that can seamlessly talk to other systems via API and visual scraping capabilities. The API revolution was a great historical step towards creating a more connected enterprise systems ecosystem, and natively embedded co-pilots may establish a new paradigm, thus making existing automation tools less valuable. That still doesn’t entirely eliminate the need for such tools, since enterprises move & adopt slowly, continue to have tons of unstructured data and continue to accumulate technical debt with multiple systems that may not talk to each other.

References and Motivation

The above is a summary of my thoughts from spending 5 years in the beautiful trenches of working in the business process automation space both on simple & mundane, as well as complicated workflow automations, which gave me a deep understanding of how middle and back offices of such organizations function, their systems & process architecture, how they implement existing workflow solutions and manage them.
